▶️ The Cognitive Bias Codex (navigable)

🔗 https://medium.com/better-humans/cognitive-bias-cheat-sheet-55a472476b18

🔗 https://en.wikipedia.org/wiki/List_of_cognitive_biases

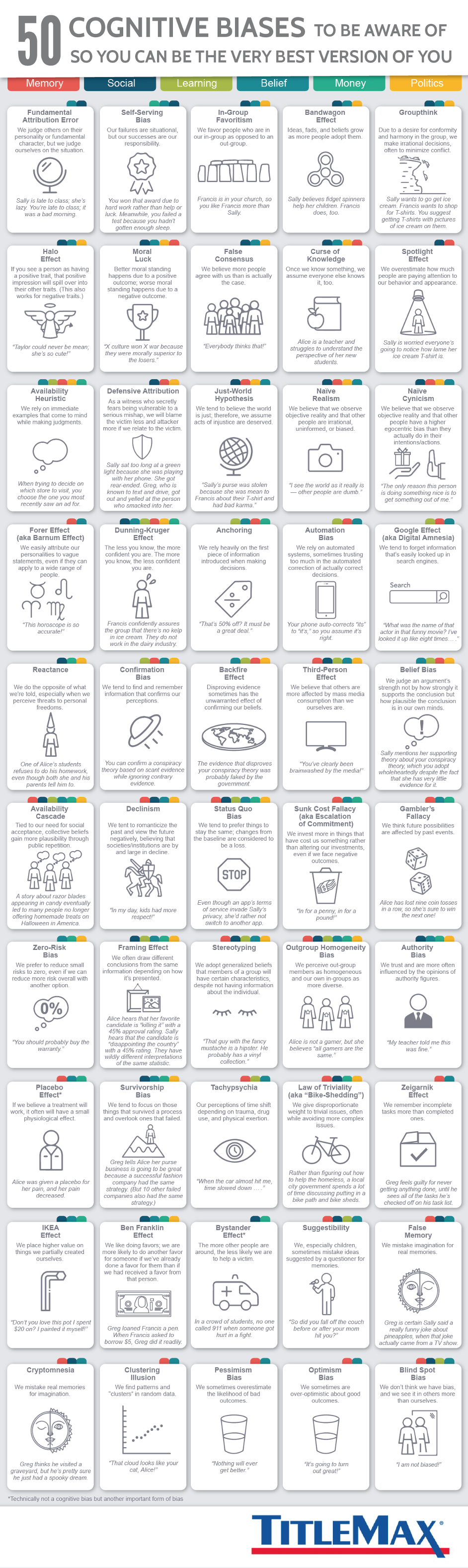

- Fundamental Attribution Error: We judge others on their personality or fundamental character, but we judge ourselves on the situation.

- Self-Serving Bias: Our failures are situational, but our successes are our responsibility.

- In-Group Favoritism: We favor people who are in our in-group as opposed to an out-group.

- Bandwagon Effect: Ideas, fads, and beliefs grow as more people adopt them.

- Groupthink: Due to a desire for conformity and harmony in the group, we make irrational decisions, often to minimize conflict.

- Halo Effect: If you see a person as having a positive trait, that positive impression will spill over into their other traits. (This also works for negative traits.)

- Moral Luck: Better moral standing happens due to a positive outcome; worse moral standing happens due to a negative outcome.

- False Consensus: We believe more people agree with us than is actually the case.

- Curse of Knowledge: Once we know something, we assume everyone else knows it, too.

- Spotlight Effect: We overestimate how much people are paying attention to our behavior and appearance.

- Availability Heuristic: We rely on immediate examples that come to mind while making judgments.

- Defensive Attribution: As a witness who secretly fears being vulnerable to a serious mishap, we will blame the victim less if we relate to the victim.

- Just-World Hypothesis: We tend to believe the world is just; therefore, we assume acts of injustice are deserved.

- Naïve Realism: We believe that we observe objective reality and that other people are irrational, uninformed, or biased.

- Naïve Cynicism: We believe that we observe objective reality and that other people have a higher egocentric bias than they actually do in their intentions/actions.

- Forer Effect (aka Barnum Effect): We easily attribute our personalities to vague statements, even if they can apply to a wide range of people.

- Dunning-Kruger Effect: The less you know, the more confident you are. The more you know, the less confident you are.

- Anchoring: We rely heavily on the first piece of information introduced when making decisions.

- Automation Bias: We rely on automated systems, sometimes trusting them so much that we let them "correct" decisions that were actually right.

- Google Effect (aka Digital Amnesia): We tend to forget information that’s easily looked up in search engines.

- Reactance: We do the opposite of what we’re told, especially when we perceive threats to personal freedoms.

- Confirmation Bias: We tend to find and remember information that confirms our perceptions.

- Backfire Effect: Disproving evidence sometimes has the unwarranted effect of confirming our beliefs.

- Third-Person Effect: We believe that others are more affected by mass media consumption than we ourselves are.

- Belief Bias: We judge an argument’s strength not by how strongly it supports the conclusion but by how plausible the conclusion is in our own minds.

- Availability Cascade: Tied to our need for social acceptance, collective beliefs gain more plausibility through public repetition.

- Declinism: We tend to romanticize the past and view the future negatively, believing that societies/institutions are by and large in decline.

- Status Quo Bias: We tend to prefer things to stay the same; changes from the baseline are considered to be a loss.

- Sunk Cost Fallacy (aka Escalation of Commitment): We invest more in things that have cost us something rather than altering our investments, even if we face negative outcomes.

- Gambler’s Fallacy: We think the odds of a future random event are affected by past events, even when the events are independent.

- Zero-Risk Bias: We prefer to reduce small risks to zero, even if we can reduce more risk overall with another option.

- Framing Effect: We often draw different conclusions from the same information depending on how it’s presented.

- Stereotyping: We adopt generalized beliefs that members of a group will have certain characteristics, despite not having information about the individual.

- Outgroup Homogeneity Bias: We perceive out-group members as homogeneous and our own in-groups as more diverse.

- Authority Bias: We trust and are more often influenced by the opinions of authority figures.

- Placebo Effect: If we believe a treatment will work, it often will have a small physiological effect.

- Survivorship Bias: We tend to focus on those things that survived a process and overlook ones that failed.

- Tachypsychia: Our perceptions of time shift depending on trauma, drug use, and physical exertion.

- Law of Triviality (aka “Bike-Shedding”): We give disproportionate weight to trivial issues, often while avoiding more complex issues.

- Zeigarnik Effect: We remember incomplete tasks more than completed ones.

- IKEA Effect: We place higher value on things we partially created ourselves.

- Ben Franklin Effect: We like doing favors; we are more likely to do another favor for someone if we’ve already done a favor for them than if we had received a favor from that person.

- Bystander Effect: The more other people are around, the less likely we are to help a victim.

- Suggestibility: We, especially children, sometimes mistake ideas suggested by a questioner for memories.

- False Memory: We mistake imagination for real memories.

- Cryptomnesia: We mistake real memories for imagination.

- Clustering Illusion: We find patterns and “clusters” in random data.

- Pessimism Bias: We sometimes overestimate the likelihood of bad outcomes.

- Optimism Bias: We sometimes are over-optimistic about good outcomes.

- Blind Spot Bias: We don’t think we have bias, and we see it in others more than in ourselves.

Cognitive Biases

Let’s explore some of the most common types of cognitive biases that entrench themselves in our lives. Awareness is the best way to beat these biases, so pay careful attention to how they influence you.

1. The decoy effect. This occurs when someone believes they have two options, but you present a third option to make the second one feel more palatable. For example, you visit a car lot to consider two cars, one listed for $30,000 and the other for $40,000. At first, the $40,000 car seems expensive, so the salesman shows you a $65,000 car. Suddenly, the $40,000 car seems reasonable by comparison. This salesman is preying on your decoy bias – the decoy being the $65,000 car that he knows you won’t buy.

2. Affect heuristic. The affect heuristic is the human tendency to base our decisions on our emotions. For example, take a study conducted at Shukutoku University, Japan. Participants judged a disease that killed 1,286 people out of every 10,000 as being more dangerous than one that was 24.14% fatal, even though the second disease kills roughly twice as many people. People reacted emotionally to the image of 1,286 people dying, whereas the percentage didn’t arouse the same mental imagery and emotions.

3. Fundamental attribution error. This is the tendency to attribute situational behavior to a person’s fixed personality. For example, people often attribute poor work performance to laziness when there are so many other possible explanations. It could be the individual in question is receiving projects they aren’t passionate about, their rocky home life is carrying over to their work life or they’re burnt out.

4. The ideomotor effect. This refers to the fact that our thoughts can trigger real reactions, including real emotions. This is why actors envision terrible scenarios, such as the death of a loved one, in order to make themselves cry on cue, and why activities such as cataloging what you’re grateful for can have such a profound, positive impact on your wellbeing.

5. Confirmation bias. Confirmation bias is the tendency to seek out information that supports our pre-existing beliefs. In other words, we form an opinion first and then seek out evidence to back it up, rather than basing our opinions on facts.

6. Conservatism bias. This bias leads people to believe that pre-existing information takes precedence over new information. Don’t be quick to reject something just because it’s radical or different. Great ideas usually are.

7. The ostrich effect. The ostrich effect is named after the popular (though mistaken) belief that ostriches bury their heads in the ground when scared. This effect describes our tendency to hide from impending problems. We may not physically bury our heads in the ground, but we might as well. For example, if your company is experiencing layoffs, you’re having relationship issues or you receive negative feedback, it’s common to attempt to push all these problems away rather than face them head-on. This doesn’t work and simply delays the inevitable.

8. Reactance. Reactance is our tendency to react to rules and regulations by exercising our freedom. A prevalent example of this is children with overbearing parents. Tell a teenager to do what you say because you told them so, and they’re very likely to start breaking your rules. Similarly, employees who feel mistreated or “Big Brothered” by their employers are more likely to take longer breaks, extra sick days or even steal from their company.

9. The halo effect. The halo effect occurs when someone creates a strong first impression and that impression sticks. This is extremely noticeable in grading. For example, often teachers grade a student’s first paper, and if it’s good, are prone to continue giving them high marks on future papers even if their performance doesn’t warrant it. The same thing happens at work and in personal relationships.

10. The horn effect. This effect is the exact opposite of the halo effect. When you perform poorly at first, you can easily get pegged as a low-performer even if you work hard enough to disprove that notion.

11. Planning fallacy. The planning fallacy is the tendency to think that we can do things more quickly than we actually can. It leaves procrastinators with incomplete work and leads type-As to overpromise and underdeliver.

12. The bandwagon effect. The bandwagon effect is the tendency to do what everyone else is doing. This creates a kind of groupthink, where people run with the first idea that’s put onto the table instead of exploring a variety of options. The bandwagon effect illustrates how we like to make decisions based on what feels good (doing what everyone else is doing), even if they’re poor alternatives.

13. Bias blind spot. If you begin to feel that you’ve mastered your biases, keep in mind that you’re most likely experiencing the bias blind spot. This is the tendency to see biases in other people but not in yourself.
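The numbers in the affect heuristic example (item 2) are easier to compare once both are put on the same scale. The short sketch below does that conversion; the function and variable names are made up for illustration and are not part of the study.

```python
def deaths_per_10000(fatality_percent: float) -> float:
    """Convert a fatality rate in percent to expected deaths per 10,000 people."""
    return fatality_percent / 100 * 10_000


# Framing A: stated as a frequency ("1,286 out of every 10,000").
frequency_framed = 1_286

# Framing B: stated as a percentage ("24.14% fatal"), converted to the same scale.
percent_framed = deaths_per_10000(24.14)

# How many times deadlier framing B is than framing A.
ratio = percent_framed / frequency_framed

print(f"{percent_framed:.0f} vs {frequency_framed} deaths per 10,000 (ratio {ratio:.2f})")
```

On the same scale, the percentage-framed disease kills about 2,414 people per 10,000, roughly 1.9 times as many as the one participants rated as more dangerous.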

Bringing It All Together

Recognizing and understanding bias is invaluable because it enables you to think more objectively and to interact more effectively with other people.

(via psych-facts)